Managing GPU and CPU resources efficiently is central to running AI workloads at scale. By open-sourcing its KAI Scheduler, Nvidia is both giving organizations access to the tool and signaling its commitment to improving AI infrastructure. Organizations can now take part in the scheduler’s collaborative development while applying it to their own machine learning (ML) and AI workflows.

The Significance of Open Source in AI

Nvidia has released the KAI Scheduler under the Apache 2.0 license, a sign of the company’s interest in community involvement in AI development. Open-sourcing this part of the Run:ai platform creates room for collaboration on real-world problems in AI development. By inviting external contributions and feedback, Nvidia allows improvements to come from, and benefit, a broader audience. The move is strategic in an industry that depends on rapid advances and varied approaches to problem solving.

A Deeper Look at KAI Scheduler’s Operational Mechanics

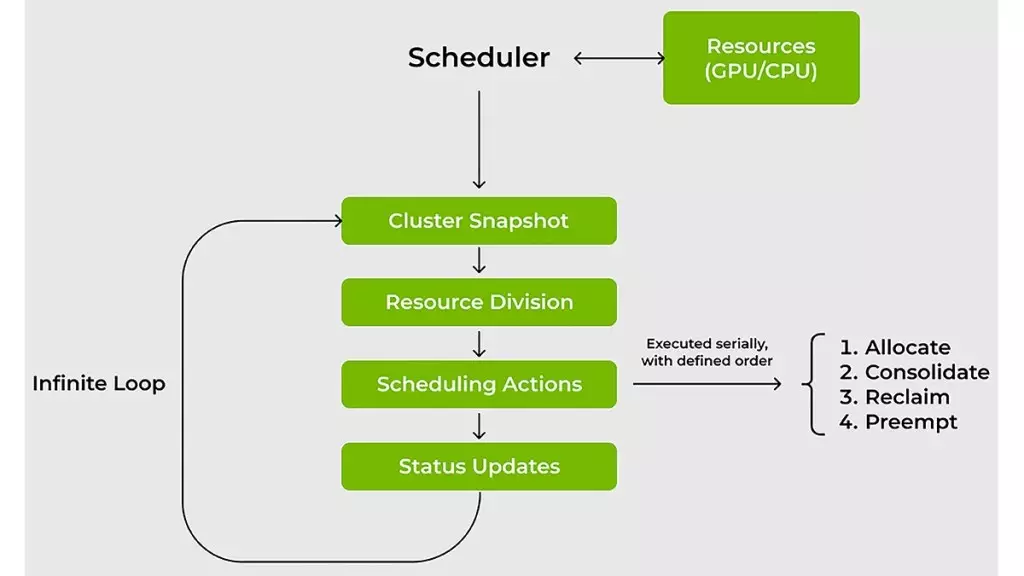

The KAI Scheduler is designed to overcome the limitations of traditional resource schedulers in managing complex AI workloads. These workloads are unpredictable: demand changes with the project phase, from interactive data exploration to distributed training that needs extensive resources. Traditional schedulers can struggle to adapt quickly to these fluctuations, which can lead to inefficient resource use.

Unlike conventional schedulers, KAI Scheduler continuously recalculates fair-share values and adjusts resource allocations in real time. This improves GPU utilization and reduces the need for constant oversight from system administrators, freeing them to focus on other critical parts of the project.
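To make the idea of recalculating fair shares concrete, here is a minimal sketch of one common approach, weighted max-min fairness. It is an illustration under stated assumptions, not KAI Scheduler’s actual algorithm or API: the function name `fair_shares`, the queue names, and the `(weight, demand)` input format are all hypothetical.

```python
def fair_shares(total_gpus, queues):
    """Weighted max-min fair split of GPUs (illustrative only).

    queues maps a hypothetical queue name to (weight, demand).
    Weights are assumed to be positive. Returns queue name -> GPUs.
    """
    shares = {name: 0.0 for name in queues}
    active = {name for name, (_, demand) in queues.items() if demand > 0}
    remaining = float(total_gpus)

    while active and remaining > 1e-9:
        total_weight = sum(queues[name][0] for name in active)
        distributed = 0.0
        satisfied = set()
        for name in active:
            weight, demand = queues[name]
            # Offer a slice proportional to weight, capped at unmet demand.
            offer = remaining * weight / total_weight
            grant = min(offer, demand - shares[name])
            shares[name] += grant
            distributed += grant
            if shares[name] >= demand - 1e-9:
                satisfied.add(name)
        remaining -= distributed
        if not satisfied:
            break  # every active queue took its full offer; nothing left
        # Capacity left over by satisfied queues is re-offered to the rest.
        active -= satisfied
    return shares


# Re-running the function as demand changes plays the role of the
# "recalibration" step: the split follows the new demand.
light = fair_shares(8, {"research": (1, 2), "training": (1, 10)})
heavy = fair_shares(8, {"research": (1, 6), "training": (1, 10)})
print(light)  # research 2.0, training 6.0
print(heavy)  # research 4.0, training 4.0
```

In this sketch, "continuous" recalculation would simply mean calling the function again whenever demand or cluster capacity changes; how often and on what triggers a real scheduler does so is not specified here.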

Speeding Up Machine Learning Processes

For ML engineers, scheduling efficiency can significantly affect project timelines. The KAI Scheduler combines gang scheduling, GPU sharing, and a hierarchical queuing system to streamline job submission. Engineers can submit multiple jobs at once and leave the rest to the scheduler, which runs them as soon as resources become available. This automation reduces wait times and speeds up development.
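Gang scheduling means that a group of related pods, such as the workers of one distributed training job, is placed all together or not at all. The sketch below shows that all-or-nothing rule with a simple greedy placement. It is a toy model, not KAI Scheduler code: `place_gang`, the node names, and the dictionary of free GPUs are hypothetical, and the greedy strategy can reject a gang that a more thorough search would fit.

```python
def place_gang(free_gpus, pod_requests):
    """Place every pod of a gang, or none of them (illustrative only).

    free_gpus:    dict of hypothetical node name -> free GPU count
    pod_requests: list of GPU counts, one entry per pod in the gang
    Returns a list of node names (one per pod, in input order),
    or None if the whole gang does not fit.
    """
    trial = dict(free_gpus)  # work on a copy so a failure changes nothing
    placement = [None] * len(pod_requests)

    # Greedy heuristic: place the largest pods first, first node that fits.
    order = sorted(range(len(pod_requests)), key=lambda i: -pod_requests[i])
    for idx in order:
        need = pod_requests[idx]
        node = next((n for n in sorted(trial) if trial[n] >= need), None)
        if node is None:
            return None  # one pod cannot be placed, so the gang waits
        trial[node] -= need
        placement[idx] = node

    free_gpus.update(trial)  # commit only after every pod has a node
    return placement


cluster = {"node-a": 4, "node-b": 2}
print(place_gang(cluster, [2, 2, 2]))  # ['node-a', 'node-a', 'node-b']
print(place_gang(cluster, [1]))        # None: the cluster is now full
```

The point of the copy-then-commit pattern is that a partially placeable job never holds GPUs while waiting for the rest, which is the situation gang scheduling is meant to prevent.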

Smart Resource Allocation

In shared computing environments, resource hogging can hinder collaboration and reduce overall productivity. Teams often secure more GPUs than they need to guarantee availability, leaving some of those GPUs idle. The KAI Scheduler addresses this with resource guarantees: teams can rely on getting the GPUs allocated to them, while idle resources are reallocated to other teams when appropriate. This makes sharing more equitable and raises overall cluster efficiency.
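One way to picture guarantees combined with reallocation of idle GPUs is a two-step allocation: first honor each team’s guaranteed quota, then lend whatever is left to teams that want more. The following is a simplified sketch under that assumption. The function `allocate`, the team names, and the round-robin lending rule are hypothetical and are not taken from KAI Scheduler.

```python
def allocate(quotas, demands):
    """Grant GPUs using guaranteed quotas plus borrowing (illustrative only).

    quotas:  hypothetical team name -> guaranteed GPUs
    demands: hypothetical team name -> GPUs requested right now
    Returns team name -> GPUs granted.
    """
    # Step 1: every team receives its demand, up to its guarantee.
    grants = {team: min(demands.get(team, 0), quota)
              for team, quota in quotas.items()}
    idle = sum(quotas.values()) - sum(grants.values())

    # Step 2: lend idle GPUs one at a time, round-robin, to teams
    # whose demand exceeds what they have been granted so far.
    wanting = [t for t in sorted(quotas) if demands.get(t, 0) > grants[t]]
    while idle > 0 and wanting:
        for team in list(wanting):
            if idle == 0:
                break
            grants[team] += 1
            idle -= 1
            if grants[team] >= demands[team]:
                wanting.remove(team)
    return grants


quotas = {"nlp": 4, "vision": 4}
# While "vision" is mostly idle, "nlp" borrows its unused GPUs.
print(allocate(quotas, {"nlp": 7, "vision": 1}))  # {'nlp': 7, 'vision': 1}
# When "vision" needs its guarantee again, "nlp" falls back to its quota.
print(allocate(quotas, {"nlp": 7, "vision": 4}))  # {'nlp': 4, 'vision': 4}
```

Because a team can always get back up to its quota, it has less reason to hold GPUs it is not using, which is the over-provisioning habit described above. How a real scheduler reclaims borrowed GPUs from running jobs is outside the scope of this sketch.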

Simplifying Complex Connections Across AI Frameworks

Connecting AI workloads to tools like Kubeflow, Ray, and Argo typically requires extensive manual configuration. KAI Scheduler simplifies this with its built-in podgrouper, which automatically detects and connects with these tools and frameworks, removing configuration work that often delays projects. This lowers the barrier to entry for teams experimenting with new methods and technologies and allows faster iteration.
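The article does not describe how the podgrouper works internally. As a rough illustration of what automatic grouping could look like, the sketch below assumes that pods are grouped by the top-level object that owns them, so that all workers of one job end up in the same group without manual labeling. The functions `top_owner` and `group_pods`, the identifiers, and the ownership map are hypothetical.

```python
from collections import defaultdict


def top_owner(obj_id, owners):
    """Follow owner links upward until an object with no owner is reached.

    owners maps a hypothetical object id to the id of its owner.
    The `seen` set stops the walk if the links ever form a cycle.
    """
    seen = set()
    while obj_id in owners and obj_id not in seen:
        seen.add(obj_id)
        obj_id = owners[obj_id]
    return obj_id


def group_pods(pods, owners):
    """Group pods by their top-level owner (illustrative only)."""
    groups = defaultdict(list)
    for pod in pods:
        groups[top_owner(pod, owners)].append(pod)
    return dict(groups)


owners = {
    "worker-0": "rayjob/train",
    "worker-1": "rayjob/train",
    "step-a": "workflow/etl",
}
pods = ["worker-0", "worker-1", "step-a", "solo"]
print(group_pods(pods, owners))
# {'rayjob/train': ['worker-0', 'worker-1'],
#  'workflow/etl': ['step-a'], 'solo': ['solo']}
```

A group built this way could then be handed to an all-or-nothing placement step, so that the two workers of the hypothetical `rayjob/train` are scheduled together.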

The Path Forward for AI Development

As Nvidia refines its offerings and encourages community participation, tools like the KAI Scheduler mark a shift toward more efficient and collaborative AI development. Intelligent resource management and easier connections across tools and frameworks give teams more room to experiment. In the long term, this could change how organizations approach AI projects, helping them respond to customer needs and market demands more quickly.
