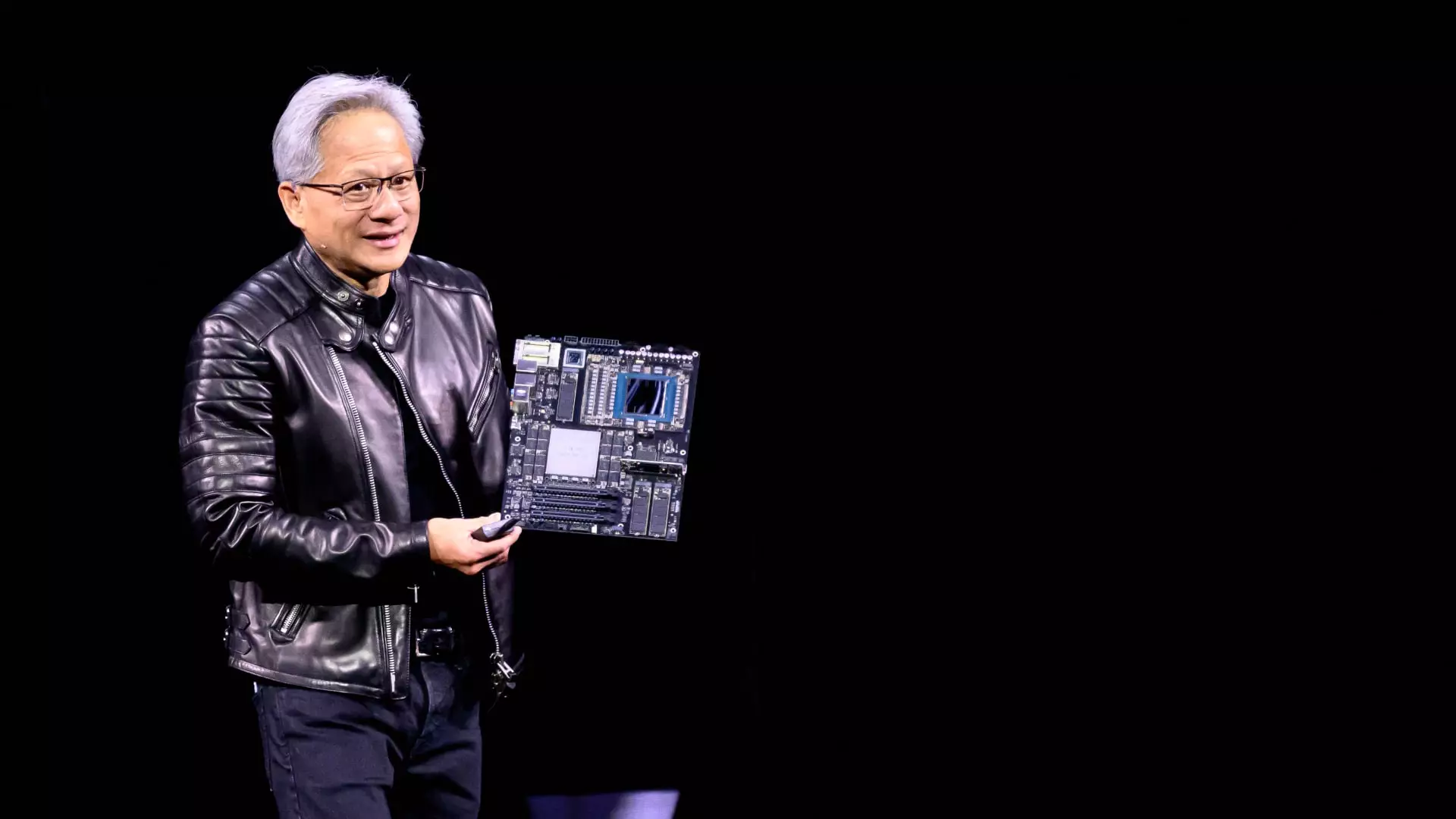

At the recent GTC conference, Nvidia CEO Jensen Huang delivered an electrifying keynote that painted a compelling picture of the future of artificial intelligence (AI). Stepping away from a scripted presentation, Huang emphasized one pivotal point: the need for speed. His assertion that acquiring the fastest chips available could change the landscape for cloud providers and AI companies alike is both ambitious and grounded in the realities of technological advancement. Speed, he argues, is not merely an operational parameter—it is the key cost-reduction mechanism that will drive the next decade of AI infrastructure development.

Huang’s belief that faster chips will eliminate hesitations surrounding cost and return on investment is rooted in the concept of efficiency. With chips that complete far more work per unit of time, companies could serve AI applications to millions of users, a marked improvement over current performance. This shift could redefine the financial equations that companies have relied upon, converting once-daunting expenses into manageable investments.

A New Economic Framework

In an era where every dollar counts, Huang took the time to break down the economics of faster GPUs for hyperscale cloud providers, framing them around a single metric: cost per token. This measure, the expense incurred to produce one unit of AI output, shifts the evaluation of chip performance from purchase price to the cost of the work delivered. By working through back-of-the-envelope numbers during his talk, he addressed an audience full of financial questions, illustrating that the economics could favor those who invest in Nvidia’s innovations.
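To make the cost-per-token idea concrete, here is a minimal sketch of the arithmetic. It assumes the metric is simply the hourly cost of running a system divided by the tokens it produces in that hour. The function name and every figure below are hypothetical placeholders chosen for illustration; none of them are numbers from Huang’s talk or Nvidia pricing.

```python
def cost_per_token(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Cost in USD to produce one token, given an hourly running cost
    and a sustained throughput. Both inputs are illustrative assumptions."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour


# Hypothetical comparison: a system that costs twice as much to run
# but produces four times as many tokens per second.
baseline = cost_per_token(hourly_cost_usd=10.0, tokens_per_second=1_000)
faster = cost_per_token(hourly_cost_usd=20.0, tokens_per_second=4_000)

print(f"baseline: ${baseline * 1e6:.2f} per million tokens")
print(f"faster:   ${faster * 1e6:.2f} per million tokens")
print(f"faster / baseline cost ratio: {faster / baseline:.2f}")
```

Under these made-up inputs, the more expensive system still halves the cost of each token, which is the shape of the argument that speed acts as a cost-reduction mechanism. Real comparisons would also need to account for power, utilization, and capital costs, which this sketch leaves out.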

The significance of this approach is considerable. As AI capabilities rapidly evolve, the return on investment for cutting-edge chips may become clearer and more compelling, drawing more players into the fold. Firms weighing large-scale investments will be more inclined to commit funds, given the concrete metrics Huang presented. The market for AI infrastructure is not just vast; it is poised for rapid growth, with estimates of several hundred billion dollars earmarked for investment in the coming years.

The Competitive Landscape and Nvidia’s Edge

Despite the booming AI chip market, skeptics have questioned whether custom chips developed by major cloud providers like Microsoft, Google, and Amazon could rival Nvidia’s GPUs. Huang met this skepticism head-on. He stated that such custom chips, often dubbed ASICs (Application-Specific Integrated Circuits), lack the flexibility required to adapt to the fast-paced evolution of AI algorithms, a crucial factor in maintaining competitiveness.

Huang’s dismissal of the widespread adoption of ASICs as a threat reflects his confidence in Nvidia’s ongoing R&D and chip development strategies. The conversation around ASICs serves as a reminder that while companies may invest heavily in bespoke hardware to optimize their operations, the breadth and versatility offered by Nvidia’s solutions, like the upcoming Blackwell Ultra system, will likely uphold the company’s dominance. Huang’s assertion that “a lot of ASICs get canceled” hints at the turbulence of custom hardware development, where ambitious projects are often abandoned.

Gearing Up for the Future

The unveiling of Nvidia’s roadmap extending into the years 2027 and 2028, featuring chips like Rubin Next and Feynman, signals a proactive approach to market needs. Huang’s acknowledgment that cloud customers are already laying groundwork for formidable data center projects illustrates the urgency and readiness of the AI sector to evolve rapidly. The approval of budgets and infrastructure signals a clear recognition among industry leaders that AI isn’t merely a tool for efficiency; it is a route toward market transformation.

Ultimately, the investment landscape is set to shift as companies grapple with the realities of building expansive AI infrastructures. Huang’s insistence that companies like Nvidia must remain at the forefront of innovation poses an exciting challenge. “What do you want for several hundred billion dollars?” he provocatively asked, stirring the imagination of those with the vision to invest wisely. The choices made in the coming years will likely shape the future trajectory of not just AI, but the very fabric of technological progress itself.

In this bold narrative, Huang illuminates a path that champions speed, efficiency, and innovation as the cornerstones of what is to come. His perspective encapsulates a moment where the only limit lies in our willingness to embrace the future of computing with open arms and an informed mindset. The revolution is not just encouraged; it is inevitable.
